PyTorch Deep Explainer MNIST example

A simple example showing how to use Deep Explainer to explain the predictions of a CNN trained on MNIST with PyTorch.

[1]:

import torch, torchvision
from torchvision import datasets, transforms
from torch import nn, optim
from torch.nn import functional as F

import numpy as np
import shap

[2]:

batch_size = 128
num_epochs = 2
device = torch.device('cpu')


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()

        # 1x28x28 input -> 10x12x12 -> 20x4x4 feature maps
        self.conv_layers = nn.Sequential(
            nn.Conv2d(1, 10, kernel_size=5),
            nn.MaxPool2d(2),
            nn.ReLU(),
            nn.Conv2d(10, 20, kernel_size=5),
            nn.Dropout(),
            nn.MaxPool2d(2),
            nn.ReLU(),
        )

        # 320 = 20 channels * 4 * 4; the final Softmax yields class probabilities
        self.fc_layers = nn.Sequential(
            nn.Linear(320, 50),
            nn.ReLU(),
            nn.Dropout(),
            nn.Linear(50, 10),
            nn.Softmax(dim=1)
        )

    def forward(self, x):
        x = self.conv_layers(x)
        x = x.view(-1, 320)  # flatten the feature maps for the linear layers
        x = self.fc_layers(x)
        return x

def train(model, device, train_loader, optimizer, epoch):
    model.train()
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        # the model outputs probabilities, so take the log before the NLL loss
        loss = F.nll_loss(output.log(), target)
        loss.backward()
        optimizer.step()
        if batch_idx % 100 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))

def test(model, device, test_loader):
    model.eval()
    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output.log(), target).item()  # add this batch's mean loss
            pred = output.max(1, keepdim=True)[1]  # get the index of the max probability
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)
    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))

train_loader = torch.utils.data.DataLoader(
    datasets.MNIST('mnist_data', train=True, download=True,
                   transform=transforms.Compose([
                       transforms.ToTensor()
                   ])),
    batch_size=batch_size, shuffle=True)

test_loader = torch.utils.data.DataLoader(
    datasets.MNIST('mnist_data', train=False, transform=transforms.Compose([
                       transforms.ToTensor()
                   ])),
    batch_size=batch_size, shuffle=True)

model = Net().to(device)
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

for epoch in range(1, num_epochs + 1):
    train(model, device, train_loader, optimizer, epoch)
    test(model, device, test_loader)

Train Epoch: 1 [0/60000 (0%)] Loss: 2.302562

Train Epoch: 1 [12800/60000 (21%)] Loss: 2.175707

Train Epoch: 1 [25600/60000 (43%)] Loss: 1.268371

Train Epoch: 1 [38400/60000 (64%)] Loss: 0.844928

Train Epoch: 1 [51200/60000 (85%)] Loss: 0.708796

Test set: Average loss: 0.0046, Accuracy: 8997/10000 (90%)

Train Epoch: 2 [0/60000 (0%)] Loss: 0.776716

Train Epoch: 2 [12800/60000 (21%)] Loss: 0.410855

Train Epoch: 2 [25600/60000 (43%)] Loss: 0.501217

Train Epoch: 2 [38400/60000 (64%)] Loss: 0.553973

Train Epoch: 2 [51200/60000 (85%)] Loss: 0.396363

Test set: Average loss: 0.0025, Accuracy: 9382/10000 (94%)
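A note on reading the "Average loss" figures in the log above: `F.nll_loss` averages over the batch by default, and the test loop adds up those per-batch means before dividing by the number of samples. The reported value is therefore smaller than the per-sample average by roughly the batch size. The sketch below illustrates the effect with NumPy only; the per-sample losses are synthetic stand-ins, not values from the model.

```python
import numpy as np

batch_size = 128
rng = np.random.default_rng(0)

# Synthetic per-sample losses standing in for the 10,000 test examples.
per_sample_loss = rng.uniform(0.0, 1.0, size=10_000)

# What the test loop accumulates: one mean per batch, summed over batches.
batch_means = [per_sample_loss[i:i + batch_size].mean()
               for i in range(0, len(per_sample_loss), batch_size)]
reported = sum(batch_means) / len(per_sample_loss)

# The per-sample average over the whole test set.
true_average = per_sample_loss.mean()

# The ratio is close to the batch size (not exact, since the last batch is smaller).
ratio = true_average / reported
print(reported, true_average, ratio)
```

Under this reading, the reported 0.0025 corresponds to a per-sample loss of roughly 0.3, which is in line with the training losses printed for the second epoch. Passing `reduction='sum'` to `F.nll_loss` in the test loop would make the printed figure a per-sample average directly.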

[6]:

# since shuffle=True, this is a random sample of test data
batch = next(iter(test_loader))
images, _ = batch

# 100 images serve as the background (reference) set, 3 images are explained
background = images[:100]
test_images = images[100:103]

e = shap.DeepExplainer(model, background)
shap_values = e.shap_values(test_images)

[7]:

# move the channel axis last for plotting: (N, C, H, W) -> (N, H, W, C)
shap_numpy = [np.swapaxes(np.swapaxes(s, 1, -1), 1, 2) for s in shap_values]
test_numpy = np.swapaxes(np.swapaxes(test_images.numpy(), 1, -1), 1, 2)
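The two chained `np.swapaxes` calls in this cell reorder each array from channels-first to channels-last. As a minimal sketch, the check below confirms on a synthetic array that they are equivalent to a single `np.transpose`; the small non-square shape is an arbitrary choice made here so that a swapped height and width would be detected.

```python
import numpy as np

# Synthetic stand-in for one class's SHAP values: (N, C, H, W) = (3, 2, 4, 5).
rng = np.random.default_rng(0)
s = rng.normal(size=(3, 2, 4, 5))

# The two chained swaps: (N, C, H, W) -> (N, W, H, C) -> (N, H, W, C).
swapped = np.swapaxes(np.swapaxes(s, 1, -1), 1, 2)

# The same reordering expressed as a single transpose.
transposed = np.transpose(s, (0, 2, 3, 1))

print(swapped.shape)                         # (3, 4, 5, 2)
print(np.array_equal(swapped, transposed))   # True
```

Either form works; the single transpose may be easier to read because the target axis order is written out explicitly.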

[8]:

# plot the feature attributions

shap.image_plot(shap_numpy, -test_numpy)

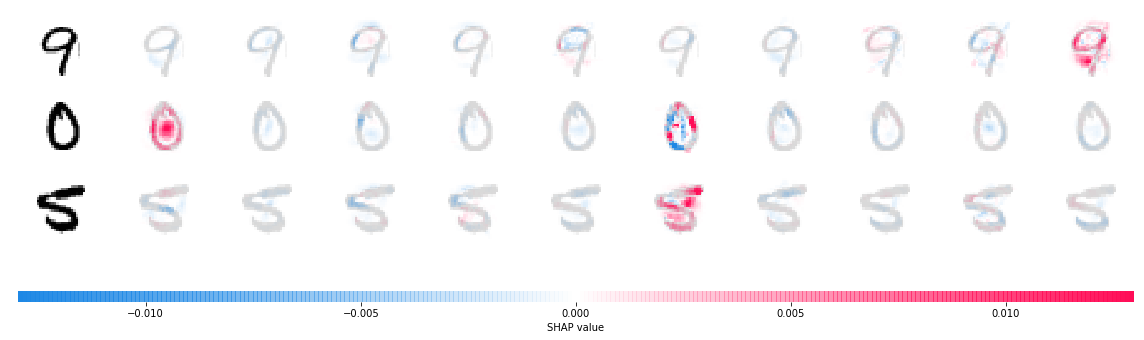

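The plot above shows the explanations for each class on three predictions, one row per test image. After the input image in the first column, the explanations are ordered for classes 0-9 going left to right along each row. Red pixels increase the model's output for that class, while blue pixels decrease it.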
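Beyond the plot, the attributions can be summarized numerically. The sketch below sums each class's attributions over all pixels, assuming the list layout used in this notebook (one array per class, each shaped `(N, C, H, W)`). The helper name `per_class_totals` and the random arrays are hypothetical stand-ins introduced here for illustration; they are not part of SHAP.

```python
import numpy as np

def per_class_totals(shap_values):
    """Sum each class's attributions over all pixels.

    shap_values: list with one array per class, each shaped (N, C, H, W).
    Returns an array shaped (N, num_classes).
    """
    return np.stack(
        [s.reshape(s.shape[0], -1).sum(axis=1) for s in shap_values],
        axis=1,
    )

# Synthetic stand-in for real SHAP values: 10 classes, 3 images of 1x28x28.
rng = np.random.default_rng(0)
fake_shap_values = [rng.normal(scale=1e-3, size=(3, 1, 28, 28)) for _ in range(10)]

totals = per_class_totals(fake_shap_values)
print(totals.shape)           # (3, 10)
print(totals.argmax(axis=1))  # class with the largest total attribution per image
```

With the real `shap_values` from this notebook, `totals + e.expected_value` should come out approximately equal to the model's output probabilities for `test_images`, since the attributions are designed to sum to the difference between a prediction and the expected output over the background set. The match is approximate rather than exact.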